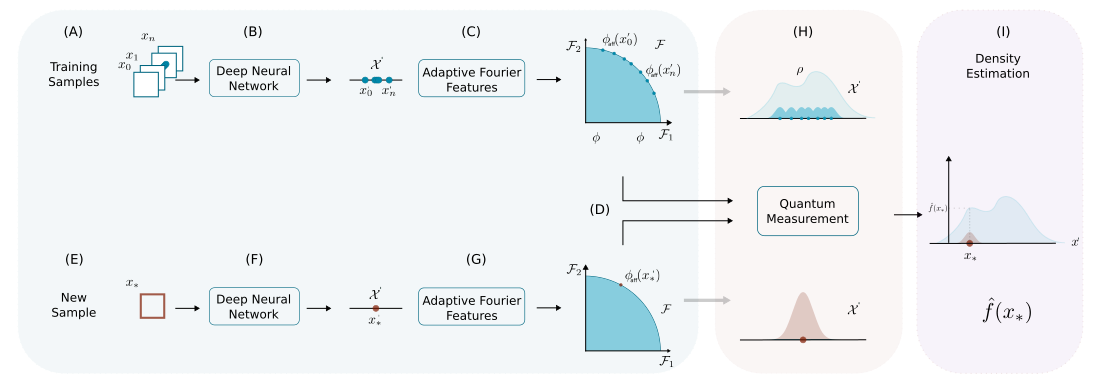

DEMANDE (Density Matrix NEural Density Estimation) is a neural density estimation method based on density matrices and adaptive Fourier features. It provides a flexible machine-learning approach to estimate probability density functions from data, grounded in the mathematical formalism of density matrices commonly used in quantum mechanics. (IEEE)

Traditional density estimation methods like Kernel Density Estimation (KDE) scale poorly with dimensionality and dataset size. DEMANDE models densities using density matrices combined with adaptive Fourier feature maps, yielding a scalable, data-driven estimator that can be integrated with deep learning tools and evaluated efficiently. (IEEE)

- Density matrix representation of probability distributions

- Adaptive Fourier features to approximate kernels and embed data

- Python implementation with example notebooks

- Modular and extensible for research and experiments

- Includes tests and baseline usage examples
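To make the first two bullets concrete, here is a minimal NumPy sketch of density estimation with a density matrix and Fourier features. It is not the DEMANDE implementation: the features below are fixed random Fourier features for a Gaussian kernel, not the adaptive (trained) features the method uses, and every name in it (`make_random_fourier_features`, `fit_density_matrix`, `estimate_density`, `gamma`, `n_features`) is an illustrative assumption.

```python
import numpy as np


def make_random_fourier_features(dim, n_features, gamma, rng):
    """Return phi such that phi(x) . phi(y) ~ exp(-gamma * ||x - y||^2)."""
    # Frequencies follow the Gaussian kernel's spectral density N(0, 2*gamma*I).
    W = rng.normal(0.0, np.sqrt(2.0 * gamma), size=(dim, n_features))
    b = rng.uniform(0.0, 2.0 * np.pi, size=n_features)

    def phi(X):
        return np.sqrt(2.0 / n_features) * np.cos(X @ W + b)

    return phi


def fit_density_matrix(Phi):
    """Average of the outer products phi(x_i) phi(x_i)^T over the training set."""
    return Phi.T @ Phi / Phi.shape[0]


def estimate_density(phi, rho, X_query, gamma):
    """Evaluate c * phi(x)^T rho phi(x) for each query point."""
    Q = phi(X_query)
    dim = X_query.shape[1]
    # phi^T rho phi approximates the mean of exp(-2*gamma*||x - x_i||^2),
    # whose integral over R^dim is (pi / (2*gamma))^(dim/2).
    norm = (2.0 * gamma / np.pi) ** (dim / 2.0)
    return norm * np.einsum("nd,de,ne->n", Q, rho, Q)


rng = np.random.default_rng(0)
X_train = rng.normal(size=(2000, 2))        # toy data: 2D standard normal
gamma = 1.0                                 # illustrative bandwidth parameter
phi = make_random_fourier_features(2, 500, gamma, rng)
rho = fit_density_matrix(phi(X_train))      # (500, 500) density matrix
X_query = np.array([[0.0, 0.0], [3.0, 3.0]])
density = estimate_density(phi, rho, X_query, gamma)
```

In this sketch the training data is summarized by `rho`, whose size depends only on `n_features`, so the cost of evaluating a query point does not grow with the number of training samples.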

Install Miniconda, then run the following commands to create the demande environment:

conda env create -f environment.yml

conda activate demande

Next, install the package:

pip install -e .

Or, if you want the development dependencies as well:

pip install -e ".[dev]"

Then create the reports, mlflow, and data directories:

mkdir reports mlflow data

All experiments are logged to MLflow using a SQLite backend stored under mlflow/. Create that directory if it does not already exist:

mkdir -p mlflow/

After running your experiments, launch the MLflow dashboard with the following command:

mlflow ui --port 8080 --backend-store-uri sqlite:///mlflow/tracking.db

The dataset is publicly available on Zenodo.

The dataset contains the features and probabilities of ten different functions.

Each dataset is saved using NumPy arrays.
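As a rough illustration of working with feature and probability arrays stored this way, the sketch below saves and reloads a pair of `.npy` files. The file names (`example_features.npy`, `example_probabilities.npy`) and the toy data are hypothetical placeholders; check the Zenodo record for the actual file names and array layout.

```python
import tempfile
from pathlib import Path

import numpy as np

rng = np.random.default_rng(0)

# Toy stand-in data: 2D standard-normal samples and their exact density values.
features = rng.normal(size=(1000, 2))
probabilities = np.exp(-0.5 * np.sum(features**2, axis=1)) / (2.0 * np.pi)

with tempfile.TemporaryDirectory() as tmp:
    data_dir = Path(tmp)
    # Hypothetical file names, used only for this round trip.
    np.save(data_dir / "example_features.npy", features)
    np.save(data_dir / "example_probabilities.npy", probabilities)

    X = np.load(data_dir / "example_features.npy")
    p = np.load(data_dir / "example_probabilities.npy")
```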

The dataset Arc corresponds to a two-dimensional random sample drawn from a random vector

with probability density function

where

This dataset was used to evaluate neural density estimation methods (2017).

The dataset Potential 1 corresponds to a two-dimensional random sample drawn from a random vector

with probability density function

The normalizing constant is approximately 6.52, calculated using Monte Carlo integration.
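For readers unfamiliar with the technique, a normalizing constant can be estimated by Monte Carlo integration roughly as follows. This is a generic sketch, not the procedure used to obtain the constants in this README: the sampling scheme (uniform over a box), the box limits, and the toy unnormalized density (a Gaussian bump whose exact integral is $2\pi$) are all assumptions chosen so the result can be checked.

```python
import numpy as np


def unnormalized_density(X):
    """Toy stand-in: exp(-||x||^2 / 2), whose exact integral over R^2 is 2*pi."""
    return np.exp(-0.5 * np.sum(X**2, axis=1))


def monte_carlo_normalizer(f, low, high, dim, n_samples, rng):
    """Estimate the integral of f over the box [low, high]^dim by uniform sampling."""
    X = rng.uniform(low, high, size=(n_samples, dim))
    volume = (high - low) ** dim
    # The mean of f under uniform sampling, times the box volume, estimates the integral.
    return volume * f(X).mean()


rng = np.random.default_rng(0)
Z = monte_carlo_normalizer(
    unnormalized_density, low=-6.0, high=6.0, dim=2, n_samples=200_000, rng=rng
)
```

Dividing the unnormalized density by `Z` then gives values that integrate to approximately one over the box, provided the box covers essentially all of the mass.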

The dataset Potential 2 corresponds to a two-dimensional random sample drawn from a random vector

with probability density function

where

The normalizing constant is approximately 8, calculated using Monte Carlo integration.

The dataset Potential 3 corresponds to a two-dimensional random sample drawn from a random vector

with probability density function

where

and

The normalizing constant is approximately 13.9, calculated using Monte Carlo integration.

The dataset Potential 4 corresponds to a two-dimensional random sample drawn from a random vector

with probability density function

where

and

The normalizing constant is approximately 13.9, calculated using Monte Carlo integration.

The dataset 2D mixture corresponds to a two-dimensional random sample drawn from

with probability density

where

and

The dataset 10D-mixture corresponds to a 10-dimensional random sample drawn from

with a mixture of four diagonal normal probability density functions

where

- each $\mu_i$ is drawn uniformly from the interval $[-0.5, 0.5]$

- each $\sigma_i$ is drawn uniformly from the interval $[-0.01, 0.5]$

Each component of the mixture is selected with probability
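A mixture of diagonal normals like the one described above could be sampled and evaluated along these lines. This is a sketch under stated assumptions, not the generator shipped in the repository: the function names are hypothetical, the component weights are assumed equal, and the lower bound for the standard deviations is taken as a positive `0.01` because a normal distribution requires a strictly positive scale.

```python
import numpy as np


def sample_mixture(means, sigmas, weights, n_samples, rng):
    """Draw samples from a mixture of diagonal normals."""
    component = rng.choice(len(weights), size=n_samples, p=weights)
    noise = rng.standard_normal((n_samples, means.shape[1]))
    return means[component] + sigmas[component] * noise


def mixture_log_pdf(X, means, sigmas, weights):
    """Log-density of the mixture at each row of X, using log-sum-exp for stability."""
    dim = means.shape[1]
    # z has shape (n_samples, n_components, dim).
    z = (X[:, None, :] - means[None, :, :]) / sigmas[None, :, :]
    log_comp = (
        -0.5 * np.sum(z**2, axis=2)
        - np.sum(np.log(sigmas), axis=1)
        - 0.5 * dim * np.log(2.0 * np.pi)
    )
    a = log_comp + np.log(weights)
    a_max = a.max(axis=1, keepdims=True)
    return (a_max + np.log(np.exp(a - a_max).sum(axis=1, keepdims=True)))[:, 0]


rng = np.random.default_rng(0)
n_components, dim = 4, 10
means = rng.uniform(-0.5, 0.5, size=(n_components, dim))
# Assumption: a strictly positive lower bound, since a standard deviation must be > 0.
sigmas = rng.uniform(0.01, 0.5, size=(n_components, dim))
# Assumption: equal component weights, chosen only for illustration.
weights = np.full(n_components, 1.0 / n_components)

features = sample_mixture(means, sigmas, weights, 1000, rng)
log_probabilities = mixture_log_pdf(features, means, sigmas, weights)
```

Working in log space avoids overflow and underflow in ten dimensions, where products of narrow Gaussian factors can become extremely large or small.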

    📦 demande
    ├── src/                          # Core implementation
    │   ├── configs/
    │   ├── dataset_utils/
    │   │   ├── generators/
    │   │   └── probability_estimators/
    │   ├── mlflow_utils/
    │   ├── models/
    │   │   ├── demande/
    │   │   └── normalizing_flows/
    │   ├── training/
    │   │   └── model_building/
    │   ├── utils/
    │   └── visualizations/
    ├── notebooks/                    # Python scripts to run demos
    ├── tests/                        # Unit and integration tests
    ├── data/                         # Example dataset
    ├── pyproject.toml
    ├── environment.yaml
    ├── README.md
    └── LICENSE

Run the test suite:

pytest tests/

DEMANDE builds on the idea of density matrices as probability density estimators, with roots in kernel and random Fourier feature methods. ([Fast Kernel Density Estimation](https://link.springer.com/chapter/10.1007/978-3-031-22419-5_14))

If you use the ideas of this code in your research, please cite:

@article{gallego2023demande,

title={DEMANDE: Density Matrix Neural Density Estimation},

author={Gallego-Meji{\'a}, Joseph A. and Gonz{\'a}lez, Fabio A.},

journal={IEEE Access},

year={2023}

}

If you use the dataset in your research, please cite:

@article{gallego2023demandedataset,

title={Demande dataset},

author={Gallego-Mejia, Joseph A and Gonzalez, Fabio A},

year={2023},

publisher={Zenodo}

}

Contributions are welcome! Please open issues for bugs, feature requests, or improvements.

- Fork the repo

- Create a feature branch

- Add tests for new behavior

- Submit a pull request

This project is licensed under the MIT License.

[1]: Gallego-Mejía, J. A., & González, F. A. (2023). DEMANDE: Density matrix neural density estimation. IEEE Access, 11, 53062-53078.