What did you realize when you tried to submit your predictions? What changes were needed to the output of the predictor to submit your results?

Changing the time limit, evaluation metric, and presets produces different results. If any of the predictor's outputs are negative, I have to set them to zero, because Kaggle does not accept negative values in a submission.
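The zero-clipping step described above can be sketched as follows. This is a minimal illustration, not the exact code used in the project: the function name `clip_negative_predictions` and the sample values are assumptions made for the example.

```python
from typing import Iterable, List


def clip_negative_predictions(predictions: Iterable[float]) -> List[float]:
    """Return a copy of the predictions with every negative value replaced by 0."""
    return [max(0.0, float(p)) for p in predictions]


# Hypothetical predictor output; real values would come from the trained predictor.
raw_predictions = [12.4, -3.1, 0.0, 87.9, -0.2]
clipped = clip_negative_predictions(raw_predictions)
print(clipped)
```

If the predictions are held in a pandas Series, `predictions.clip(lower=0)` has the same effect.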

WeightedEnsemble_L3

Exploratory data analysis helps visualize and analyze the data for better understanding.

How much better did your model perform after adding additional features and why do you think that is?

Moderately, and that was due to the change in hyperparameters.

0.20

In the time limit parameter.

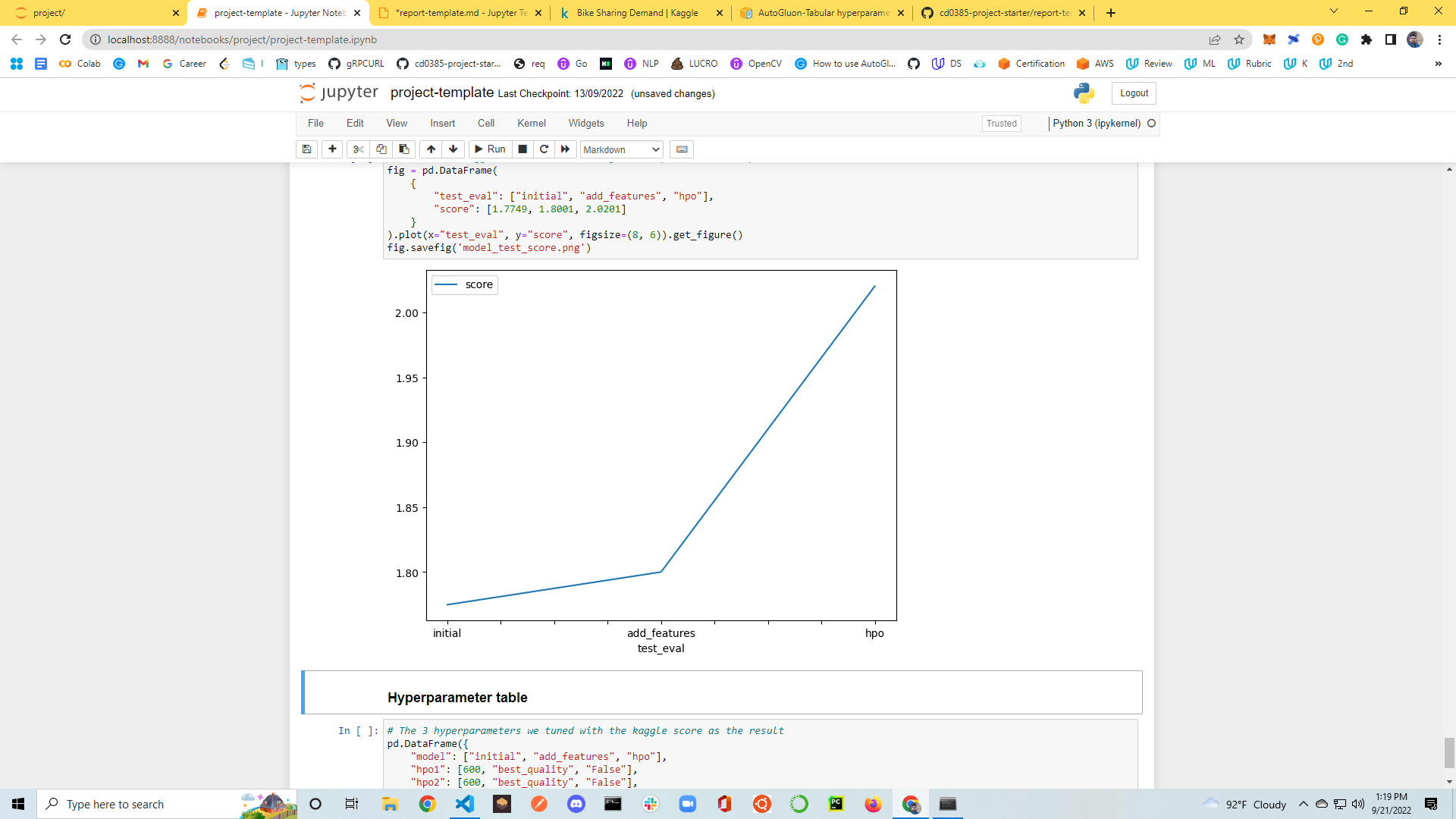

| model | hpo1 | hpo2 | hpo3 | score |
|---|---|---|---|---|
| initial | 600 | "best_quality" | "False" | 1.7749 |
| add_features | 600 | "best_quality" | "False" | 1.8001 |
| hpo | 600 | "high_quality" | "True" | 2.0201 |

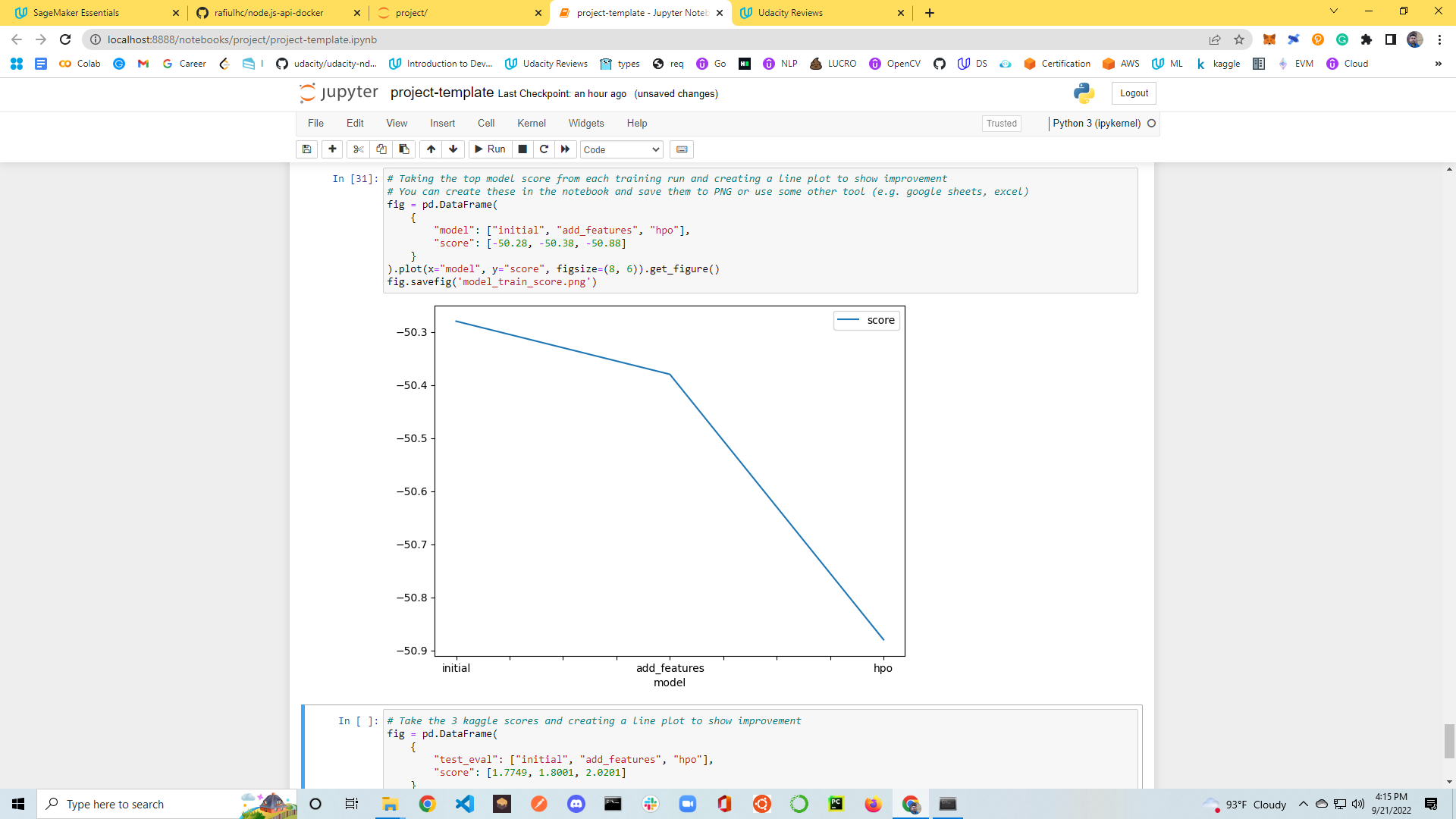

Create a line plot showing the top model score for the three (or more) training runs during the project.

Create a line plot showing the top Kaggle score for the three (or more) prediction submissions during the project.

AutoGluon is a beginner-friendly AutoML tool for training highly accurate machine learning models on unprocessed tabular datasets such as CSV files. Unlike other AutoML frameworks, which largely focus on model and hyperparameter selection, AutoGluon succeeds by ensembling several models and stacking them in multiple layers. With hyperparameter tuning, we can find a better score.