SparkMonitor is a Jupyter extension for monitoring Apache Spark jobs launched from notebooks. It displays live Spark metrics directly in the notebook interface, making it easier to understand, debug, and profile Spark workloads as they run.

It supports JupyterLab and classic Jupyter Notebook with PySpark 3.x and 4.x.


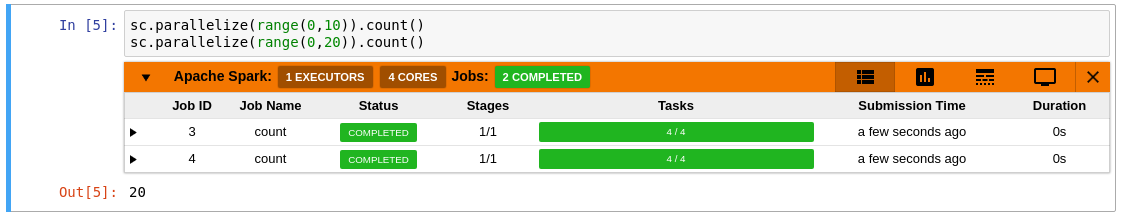

SparkMonitor adds an interactive monitoring panel below notebook cells that trigger Spark jobs, so you can inspect execution progress without leaving the notebook.

Requirements:

- Python 3.x
- PySpark 3.x or 4.x
- JupyterLab 4.x or classic Jupyter Notebook 4.4.0 or later
- Spark Classic API mode
  - SparkMonitor works with the traditional Spark driver model used by PySpark.
  - It is not compatible with Spark Connect.

Features:

- Live monitoring of Spark jobs launched from a notebook cell
- Job and stage table with progress bars and execution details
- Timeline view showing jobs, stages, and tasks over time
- Resource graphs for active tasks and executor core usage


Create and activate a virtual environment:

```bash
python -m venv venv
source venv/bin/activate
```

Install SparkMonitor together with PySpark and a notebook frontend:

```bash
pip install sparkmonitor pyspark jupyterlab
```

Enable the SparkMonitor IPython kernel extension:

```bash
ipython profile create
echo "c.InteractiveShellApp.extensions.append('sparkmonitor.kernelextension')" >> "$(ipython profile locate default)/ipython_kernel_config.py"
```

This only needs to be done once per IPython profile.
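The `echo ... >>` command above appends the line every time it runs, so running it a second time adds another copy to the config file. A minimal idempotent alternative in Python is sketched below, assuming you pass it the `ipython_kernel_config.py` path inside the directory reported by `ipython profile locate default`. `enable_kernel_extension` is a hypothetical helper for illustration, not part of SparkMonitor or IPython.

```python
from pathlib import Path

# The same line the shell command appends.
EXTENSION_LINE = (
    "c.InteractiveShellApp.extensions.append('sparkmonitor.kernelextension')"
)


def enable_kernel_extension(config_path: Path) -> bool:
    """Hypothetical helper: append the SparkMonitor extension line once.

    Returns True if the file was modified, False if the extension was
    already referenced in the file.
    """
    config_path = Path(config_path)
    config_path.parent.mkdir(parents=True, exist_ok=True)
    existing = config_path.read_text() if config_path.exists() else ""
    if "sparkmonitor.kernelextension" in existing:
        return False
    # Start the new line on its own line if the file lacks a trailing newline.
    prefix = "" if existing == "" or existing.endswith("\n") else "\n"
    with config_path.open("a") as f:
        f.write(prefix + EXTENSION_LINE + "\n")
    return True
```

Calling it repeatedly on the same file leaves exactly one extension line in place.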

To use SparkMonitor, create your Spark session with the SparkMonitor listener enabled.

This requires two Spark configurations:

| Configuration | Purpose |
|---|---|
| `spark.extraListeners` | Registers the SparkMonitor listener that collects Spark job metrics |
| `spark.driver.extraClassPath` | Points to the SparkMonitor listener JAR bundled with the `sparkmonitor` package |
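Both settings are ordinary Spark configuration entries. Because `spark.extraListeners` takes a comma-separated list of listener classes, setting it to a single value replaces any listeners you already register. The sketch below merges the SparkMonitor entries into an existing set of settings instead, assuming you keep them in a plain `dict` and that `spark.driver.extraClassPath` entries are separated by the platform path separator (`os.pathsep`). `with_sparkmonitor_conf` is a hypothetical helper, not part of SparkMonitor.

```python
import os

LISTENER_CLASS = "sparkmonitor.listener.JupyterSparkMonitorListener"


def with_sparkmonitor_conf(conf: dict[str, str], listener_jar: str) -> dict[str, str]:
    """Hypothetical helper: return a copy of `conf` with SparkMonitor added.

    Existing listeners and classpath entries are kept; the SparkMonitor
    entries are appended only if they are not already present.
    """
    merged = dict(conf)

    # spark.extraListeners is a comma-separated list of listener classes.
    listeners = [
        x.strip() for x in merged.get("spark.extraListeners", "").split(",") if x.strip()
    ]
    if LISTENER_CLASS not in listeners:
        listeners.append(LISTENER_CLASS)
    merged["spark.extraListeners"] = ",".join(listeners)

    # Assumption: classpath entries use the platform separator (":" or ";").
    classpath = [
        x for x in merged.get("spark.driver.extraClassPath", "").split(os.pathsep) if x
    ]
    if listener_jar not in classpath:
        classpath.append(listener_jar)
    merged["spark.driver.extraClassPath"] = os.pathsep.join(classpath)

    return merged
```

Each resulting key/value pair can then be passed to `SparkSession.builder.config(key, value)` before calling `getOrCreate()`.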

If you already know the exact path to the matching SparkMonitor listener JAR in your current environment, you can set `spark.driver.extraClassPath` directly:

```python
from pyspark.sql import SparkSession

spark = (
    SparkSession.builder.config(
        "spark.extraListeners",
        "sparkmonitor.listener.JupyterSparkMonitorListener",
    )
    .config(
        "spark.driver.extraClassPath",
        # Put the path to the matching SparkMonitor listener JAR here.
        "venv/lib/python3.13/site-packages/sparkmonitor/listener_spark4_2.13.jar",
    )
    .getOrCreate()
)
```

The most robust approach is to resolve the listener JAR path dynamically from the installed Python package instead of hardcoding the full environment path. The example below first checks `SPARK_HOME` and then falls back to the `pyspark` package layout used by `pip install pyspark`, where `SPARK_HOME` is often not set:

```python
import os
from pathlib import Path

import pyspark
import sparkmonitor
from pyspark.sql import SparkSession


def iter_spark_jar_dirs() -> list[Path]:
    """Return existing Spark JAR directories, checking SPARK_HOME first."""
    candidates = []
    spark_home = os.environ.get("SPARK_HOME")
    if spark_home:
        candidates.append(Path(spark_home) / "jars")
    # A pip-installed pyspark bundles its Spark JARs inside the package directory.
    candidates.append(Path(pyspark.__file__).resolve().parent / "jars")
    return [path for path in candidates if path.exists()]


def resolve_listener_jar(sparkmonitor_dir: Path) -> Path:
    """Pick the listener JAR matching the detected Spark major and Scala versions."""
    for jars_dir in iter_spark_jar_dirs():
        for jar in jars_dir.glob("spark-core_*.jar"):
            # spark-core_2.13-3.5.8.jar => scala=2.13, spark_major=3
            scala_ver, spark_ver = jar.name.split("_")[1].split("-")[:2]
            spark_major = spark_ver.split(".")[0]
            if spark_major == "3" and scala_ver == "2.12":
                return sparkmonitor_dir / "listener_spark3_2.12.jar"
            if spark_major == "3" and scala_ver == "2.13":
                return sparkmonitor_dir / "listener_spark3_2.13.jar"
            if spark_major == "4" and scala_ver == "2.13":
                return sparkmonitor_dir / "listener_spark4_2.13.jar"
    raise RuntimeError(
        "Could not detect Spark/Scala version from SPARK_HOME or the pyspark installation"
    )


sparkmonitor_dir = Path(sparkmonitor.__file__).resolve().parent
listener_jar = resolve_listener_jar(sparkmonitor_dir)

spark = (
    SparkSession.builder.config(
        "spark.extraListeners",
        "sparkmonitor.listener.JupyterSparkMonitorListener",
    )
    .config("spark.driver.extraClassPath", str(listener_jar))
    .getOrCreate()
)
```

> [!IMPORTANT]
> The correct listener JAR depends on:
>
> - the location of your Python environment
> - your Spark major version and Scala version
> - how Spark is installed (`SPARK_HOME` vs `pip install pyspark`)

You can inspect the installed package location with:

```python
import sparkmonitor

print(sparkmonitor.__path__)
```

Then locate the corresponding listener JAR in that package directory:

- `listener_spark3_2.12.jar` for Spark 3 + Scala 2.12
- `listener_spark3_2.13.jar` for Spark 3 + Scala 2.13
- `listener_spark4_2.13.jar` for Spark 4 + Scala 2.13
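The dynamic example above parses the `spark-core` JAR name with chained `str.split` calls. As a hedged alternative, assuming the `spark-core_<scala>-<spark>.jar` naming shown in that example's comment, the same version-to-JAR mapping can be written as a regular expression plus a lookup table that mirrors the list above. `listener_jar_name` is a hypothetical helper, not part of SparkMonitor; it returns `None` for unsupported combinations or unrecognized file names.

```python
import re
from pathlib import Path
from typing import Optional

# Matches e.g. "spark-core_2.13-3.5.8.jar": group 1 = Scala version, group 2 = Spark major.
SPARK_CORE_JAR = re.compile(r"^spark-core_(\d+\.\d+)-(\d+)\.\d+\.\d+.*\.jar$")

# (Spark major, Scala version) -> listener JAR file name, as listed above.
LISTENER_JARS = {
    ("3", "2.12"): "listener_spark3_2.12.jar",
    ("3", "2.13"): "listener_spark3_2.13.jar",
    ("4", "2.13"): "listener_spark4_2.13.jar",
}


def listener_jar_name(spark_core_jar: str) -> Optional[str]:
    """Hypothetical helper: map a spark-core JAR path to the listener JAR name."""
    match = SPARK_CORE_JAR.match(Path(spark_core_jar).name)
    if match is None:
        return None
    scala_ver, spark_major = match.groups()
    return LISTENER_JARS.get((spark_major, scala_ver))
```

The returned name can be joined onto the `sparkmonitor` package directory in the same way the dynamic example does.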

If needed, you can also build the listener JAR yourself with sbt, as described in the development section below.

To work on SparkMonitor locally:

```bash
# Install the package in editable mode
pip install -e .

# Build the frontend (see package.json for available scripts)
yarn run build:<action>

# Link the JupyterLab extension into your local Jupyter environment
jupyter labextension develop --overwrite .

# Watch frontend files for changes
yarn run watch

# Build the Spark listener JARs
cd scalalistener_spark3       # Spark 3 / Scala 2.12 and 2.13
sbt +package
cd ../scalalistener_spark4    # Spark 4 / Scala 2.13
sbt package
```

If SparkMonitor does not show up in your notebook, check the following:

- `sparkmonitor` is installed in the same Python environment as your notebook kernel
- `pyspark` is installed
- `jupyterlab` or `notebook` is installed
- the IPython kernel extension is enabled
- your Spark session includes:
  - `spark.extraListeners`
  - `spark.driver.extraClassPath` pointing to a valid listener JAR
- you are using Spark Classic, not Spark Connect
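Several items in the checklist above concern the Spark configuration itself. The sketch below checks a plain `dict` of Spark settings; on a running session, such a dict can be obtained with `dict(spark.sparkContext.getConf().getAll())`. `check_sparkmonitor_conf` is a hypothetical helper, not part of SparkMonitor. It assumes listener JARs keep the `listener_spark*` file names listed earlier and that classpath entries are separated by `os.pathsep`. It does not verify that the JAR matches your Spark/Scala version, and it does not check the kernel extension or Spark Connect.

```python
import os
from pathlib import Path

LISTENER_CLASS = "sparkmonitor.listener.JupyterSparkMonitorListener"


def check_sparkmonitor_conf(conf: dict[str, str]) -> list[str]:
    """Hypothetical helper: return problems found in a Spark config dict (empty if none)."""
    problems = []

    # The listener class must appear in the comma-separated listener list.
    listeners = [x.strip() for x in conf.get("spark.extraListeners", "").split(",")]
    if LISTENER_CLASS not in listeners:
        problems.append(f"spark.extraListeners does not include {LISTENER_CLASS}")

    # At least one classpath entry should be a listener JAR that exists on disk.
    classpath = [
        x for x in conf.get("spark.driver.extraClassPath", "").split(os.pathsep) if x
    ]
    listener_jars = [p for p in classpath if Path(p).name.startswith("listener_spark")]
    if not listener_jars:
        problems.append("spark.driver.extraClassPath has no SparkMonitor listener JAR")
    for jar in listener_jars:
        if not Path(jar).is_file():
            problems.append(f"listener JAR not found: {jar}")

    return problems
```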

The listener JAR must match both your Spark major version and Scala version:

```text
Spark 3 + Scala 2.12 -> listener_spark3_2.12.jar
Spark 3 + Scala 2.13 -> listener_spark3_2.13.jar
Spark 4 + Scala 2.13 -> listener_spark4_2.13.jar
```

Using the wrong listener JAR may prevent the listener from loading correctly.

Avoid hardcoding paths when possible. Environment-specific paths vary across systems, Python versions, and virtual environments. The dynamic path resolution example above is usually more portable.

`SPARK_HOME` not being set is expected in some setups, especially when Spark comes from `pip install pyspark`. In that case, use the `pyspark` package location to find the bundled Spark JARs instead of assuming `SPARK_HOME/jars` exists.

- The first version of SparkMonitor was written by krishnan-r as a Google Summer of Code project with the SWAN Notebook Service team at CERN.

- Further fixes and improvements were made by the CERN team and community contributors in swan-cern/jupyter-extensions/tree/master/SparkMonitor.

- Jafer Haider updated the extension for JupyterLab during an internship at Yelp.

- Work from the jupyterlab-sparkmonitor fork was later merged into this repository so that both JupyterLab and Jupyter Notebook are supported from a single package.

- Ongoing maintenance and development continue through the SWAN team at CERN and the community.

- PyPI package: sparkmonitor

- Releases: swan-cern/sparkmonitor releases